Understanding AI Security Risk Factors and Mitigation Strategies

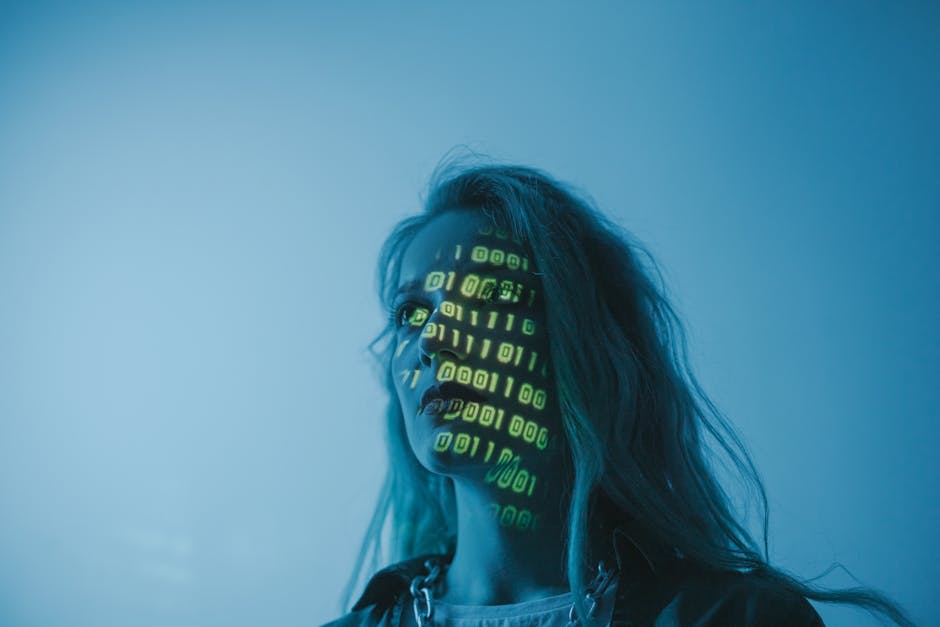

Introduction to AI Security Risks

As artificial intelligence (AI) systems are integrated into more industries, understanding their security risks becomes essential. These risks threaten data integrity, privacy, and overall system reliability.

Common AI Security Risks

- Data poisoning attacks: Malicious modifications to training data can skew AI behavior.

- Model inversion: Attackers reconstruct sensitive training data by querying a model and analyzing its outputs.

- Adversarial examples: Inputs crafted to deceive AI systems into making incorrect predictions.

- Privacy violations: Unauthorized access to personal data processed by AI.
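To make the adversarial-example risk concrete, here is a minimal sketch of a sign-of-gradient perturbation (in the style of the fast gradient sign method) against a toy logistic regression model. The weights, the input, and the `epsilon` value are invented for illustration and do not come from any real system; the function names `predict` and `fgsm_perturb` are hypothetical.

```python
import math

# Hypothetical toy model: weights and bias are made up for illustration.
WEIGHTS = [2.0, -1.0]
BIAS = 0.0


def predict(weights, bias, x):
    """Return the probability of class 1 under a logistic regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def fgsm_perturb(weights, bias, x, label, epsilon):
    """Move each feature by epsilon in the direction that increases the loss."""
    p = predict(weights, bias, x)
    # For cross-entropy loss, the gradient with respect to the input is (p - y) * w.
    grad = [(p - label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


x_clean = [0.3, 0.2]  # assumed input whose true label is 1
x_adv = fgsm_perturb(WEIGHTS, BIAS, x_clean, label=1, epsilon=0.2)

p_clean = predict(WEIGHTS, BIAS, x_clean)
p_adv = predict(WEIGHTS, BIAS, x_adv)

print(f"clean input {x_clean}: P(class 1) = {p_clean:.3f}")
print(f"adversarial input {x_adv}: P(class 1) = {p_adv:.3f}")
```

In this toy setup, a change of at most 0.2 per feature is enough to push the prediction across the 0.5 decision threshold. Real attacks target far larger models, but they rely on the same idea of following the loss gradient with respect to the input.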

Mitigation Techniques for AI Security

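Implementing robust mitigation techniques is essential to safeguard AI systems, and each technique works best when matched to a specific threat. Adversarial training exposes the model to crafted inputs during training so that it handles them more reliably. Data validation screens training data for tampering before it reaches the model. Model encryption protects stored and transmitted model parameters from unauthorized access.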
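As one illustration of data validation, the sketch below flags suspicious values in a single numeric training feature using the modified z-score, which is based on the median and the median absolute deviation (MAD). This is only one possible check, not a complete defense: it assumes poisoned records show up as statistical outliers, and the function name `split_outliers`, the sample values, and the 3.5 threshold (a common convention) are assumptions made for this example.

```python
import statistics


def split_outliers(values, threshold=3.5):
    """Split values into (kept, flagged) using the modified z-score.

    The constant 0.6745 scales the MAD so the score is comparable to a
    standard z-score for normally distributed data. The default threshold
    of 3.5 is a common convention, not a universal rule.
    """
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        # No spread to measure against, so nothing can be flagged.
        return list(values), []

    kept, flagged = [], []
    for v in values:
        score = 0.6745 * abs(v - median) / mad
        if score > threshold:
            flagged.append(v)
        else:
            kept.append(v)
    return kept, flagged


# Hypothetical feature column with one injected extreme value.
feature = [0.9, 1.0, 1.1, 1.05, 0.95, 25.0]
kept, flagged = split_outliers(feature)

print("kept:", kept)
print("flagged for review:", flagged)
```

A median-based score is used here because a mean and standard deviation would themselves be distorted by the injected value. Subtle poisoning that stays within the normal range of the data will pass this check, so it is best treated as a first filter alongside provenance tracking and review of flagged records.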

For more details, check our guide on effective mitigation strategies for AI security.

Conclusion

With the rapid advancement of AI technology, recognizing security threats and applying appropriate mitigation techniques are vital steps to ensure safe and reliable AI deployment. Stay informed and proactive to protect your AI systems from emerging risks.