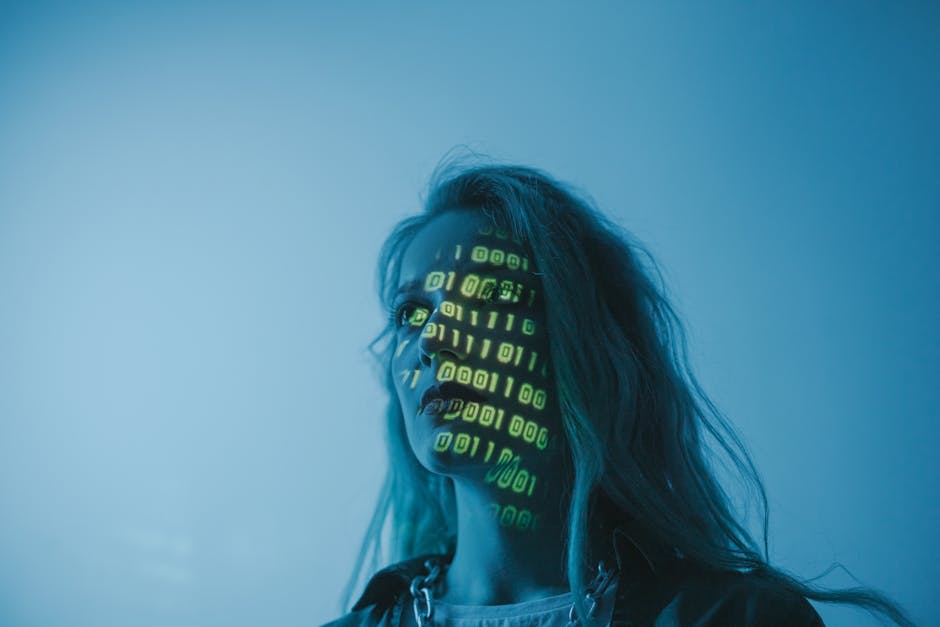

Emerging Threats in AI Security: Challenges and Future Perspectives

Understanding Emerging Threats in AI Security

Artificial intelligence (AI) has become a cornerstone of modern technology, offering innovative solutions across various industries. However, as AI systems grow more complex, new security vulnerabilities and malicious uses have started to surface.

Types of Emerging Threats

- Adversarial Attacks: Techniques that trick AI models into making incorrect predictions by subtly manipulating input data.

- Data Poisoning: Injecting malicious data during the training phase to distort the model's behavior.

- Model Theft and Inversion: Stealing or reverse-engineering an AI model in order to misuse or exploit it, or, in the case of inversion, to reconstruct information about the data it was trained on.
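As a rough illustration of the first item in the list above, the following sketch perturbs an input to a tiny logistic-regression classifier using a sign-of-gradient step, in the style of the fast gradient sign method (FGSM). The weights, bias, input, and `epsilon` are hypothetical values chosen for the example; they do not come from any real system, and real attacks target far larger models.

```python
import math

def predict(weights, bias, x):
    """Return the probability of class 1 under a logistic-regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)

def fgsm_perturb(weights, x, y_true, epsilon):
    """Move each feature by epsilon in the direction that increases the loss.

    For logistic regression with cross-entropy loss, the gradient of the loss
    with respect to the input is (p - y_true) * weights, so its sign is
    sign(weights) when y_true == 0 and -sign(weights) when y_true == 1.
    """
    direction = 1 if y_true == 0 else -1
    return [xi + epsilon * direction * sign(w) for xi, w in zip(x, weights)]

# Hypothetical model parameters and input, chosen only for illustration.
weights = [2.0, -3.0, 1.0]
bias = 0.0
x = [0.3, 0.1, 0.2]

x_adv = fgsm_perturb(weights, x, y_true=1, epsilon=0.2)

p_clean = predict(weights, bias, x)    # above 0.5, so classified as class 1
p_adv = predict(weights, bias, x_adv)  # below 0.5, so classified as class 0
print(f"clean: {p_clean:.3f}  adversarial: {p_adv:.3f}")
```

No feature moves by more than `epsilon`, yet the predicted class flips. This is the sense in which the manipulation is "subtle".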

Potential Impacts of These Threats

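These emerging threats pose significant challenges to data privacy, system reliability, and trust in AI-driven applications. For example, model inversion can expose sensitive training data, while adversarial inputs and poisoned training data can make a deployed system fail in ways that are hard to detect. Both kinds of failure can have serious consequences for organizations and individuals.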
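To make the reliability concern concrete, here is a minimal sketch of data poisoning against a one-dimensional nearest-centroid classifier. The classifier, the data points, and the injected samples are all assumptions made for illustration. The point is only that a few mislabeled training points can change how an unchanged input is classified.

```python
def centroid(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)

def train(dataset):
    """Compute one centroid per label from (feature, label) pairs."""
    by_label = {}
    for feature, label in dataset:
        by_label.setdefault(label, []).append(feature)
    return {label: centroid(vals) for label, vals in by_label.items()}

def classify(centroids, feature):
    """Return the label whose centroid is closest to the feature."""
    return min(centroids, key=lambda label: abs(centroids[label] - feature))

# Hypothetical clean training set: class 0 clusters near 1.0, class 1 near 3.0.
clean_data = [(0.8, 0), (1.0, 0), (1.2, 0), (2.8, 1), (3.0, 1), (3.2, 1)]

# Hypothetical poisoned samples: far-away points deliberately labeled class 0,
# which drag the class-0 centroid past the class-1 centroid.
poison = [(6.0, 0), (6.0, 0), (6.0, 0)]

test_point = 1.8

clean_model = train(clean_data)
poisoned_model = train(clean_data + poison)

print("clean model:   ", classify(clean_model, test_point))
print("poisoned model:", classify(poisoned_model, test_point))
```

The test point is identical in both cases. Only the training set differs, which is why poisoning can go unnoticed once the model is deployed.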

Strategies to Mitigate Emerging AI Security Threats

To defend against these threats, researchers and practitioners are developing defensive techniques such as robust (adversarial) training, continuous monitoring of model inputs and outputs, and security best practices for the data and model pipeline. Staying informed about the latest AI security research is crucial for keeping AI systems resilient.
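One simple form of continuous monitoring is to compare each incoming input against statistics gathered from trusted reference data. The sketch below flags inputs whose features lie more than a chosen number of standard deviations from the reference mean. The function names, the reference samples, and the threshold of 3.0 are assumptions for illustration. A check like this would be only one layer of defense, and it would not catch small adversarial perturbations that stay within the normal range.

```python
import statistics

def fit_baseline(samples):
    """Per-feature (mean, standard deviation) from trusted reference inputs."""
    columns = list(zip(*samples))
    return [(statistics.mean(col), statistics.stdev(col)) for col in columns]

def is_anomalous(baseline, x, threshold=3.0):
    """Return True if any feature is more than `threshold` deviations from its mean."""
    for (mean, std), value in zip(baseline, x):
        if std > 0 and abs(value - mean) / std > threshold:
            return True
    return False

# Hypothetical reference inputs with two features each.
reference = [
    [1.0, 10.0],
    [1.2, 11.0],
    [0.8, 9.0],
    [1.1, 10.5],
    [0.9, 9.5],
]
baseline = fit_baseline(reference)

typical_input = [1.05, 10.2]
suspicious_input = [1.0, 25.0]

print("typical flagged:   ", is_anomalous(baseline, typical_input))
print("suspicious flagged:", is_anomalous(baseline, suspicious_input))
```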